
Pytorch c++ inference hotsell



Product Details:

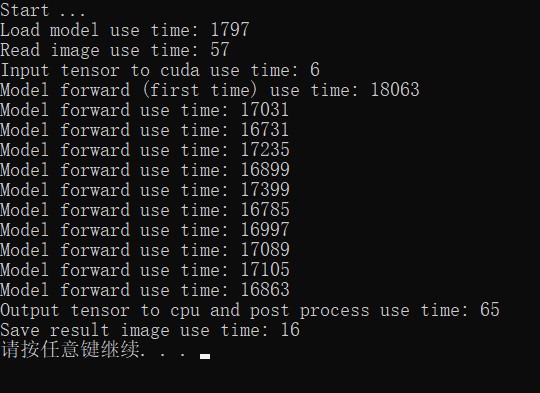

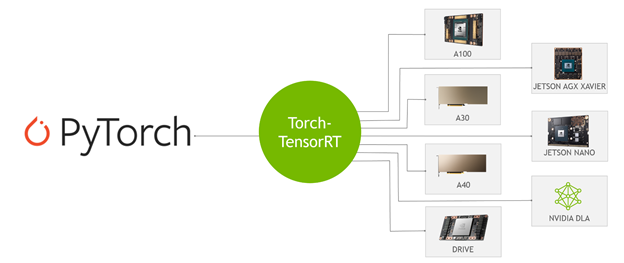

- PyTorch 2.0 Our next generation release that is faster more
- Porting Pytorch Models to C Pipelines that Port Pytorch Models
- Torch.load model hangs indefinately when invoked via python
- Renato Violin on X
- Error when building pytorch1.7.0 from source PyTorch Forums
- PyTorch 2.0 PyTorch
- Welcome to PyTorch Tutorials PyTorch Tutorials 2.2.1 cu121
- Increase efficiency using PyTorch GPU for inference Ray Core Ray
- Introducing the Intel Extension for PyTorch for GPUs
- How To Run Inference Using TensorRT C API LearnOpenCV
- CPU x10 faster than GPU Recommendations for GPU implementation
- PyTorch 1.0 tracing JIT and LibTorch C API to integrate PyTorch
- Updates Improvements to PyTorch Tutorials PyTorch
- Heap size increase constantly when inference with new thread C
- How to use TensorRT C API for high performance GPU inference by Cyrus Behroozi
- Help building cpp code with opencv C PyTorch Forums
- Loaded TorchScript Model in C and inference taken longer time
- Speeding Up Deep Learning Inference Using TensorRT Edge AI and
- Accelerating Inference Up to 6x Faster in PyTorch with Torch
- C libtorch forward much slower than python C PyTorch Forums
- PyTorch in C Quickstart
- PyTorch Model Inference using ONNX and Caffe2 LearnOpenCV
- C Inferencing using Torchscript Exported Torchvision model Erorr
- Deploying PyTorch Model into a C Application Using ONNX Runtime
- Deploy PyTorch 1.0 into production Flask Python vs NodeJS C
- GitHub nesajov fastai pytorch cpp inference PyTorch 1.0
- A Taste of PyTorch C frontend API by Venkata Chintapalli
- How To Run Inference Using TensorRT C API r pytorch
- python Pytorch C Libtroch using inter op parallelism
- GitHub Wizaron pytorch cpp inference Serving PyTorch 1.0 Models
- GitHub BIGBALLON PyTorch CPP PyTorch C inference with LibTorch


Model Number: SKU#7591692